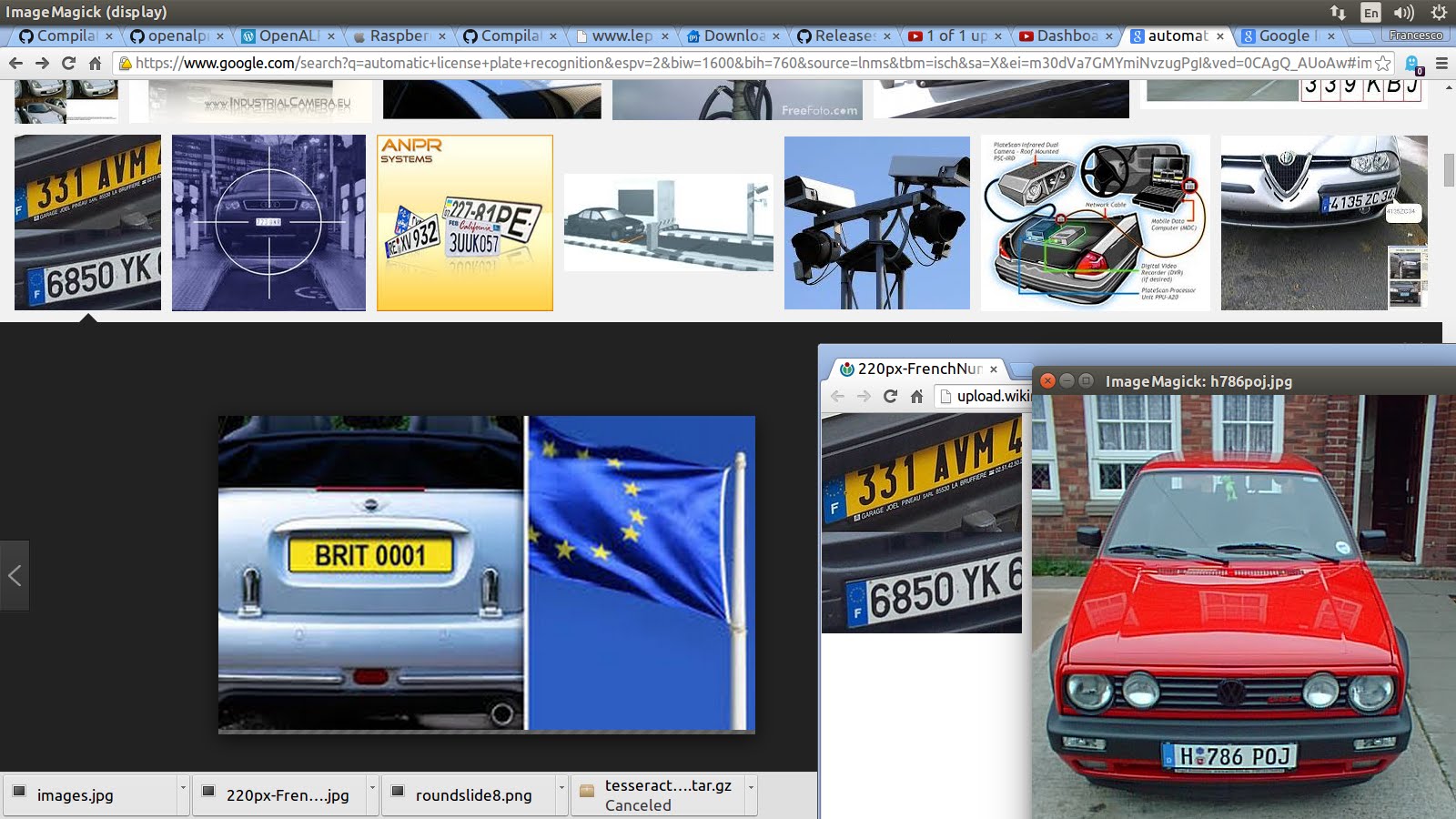

10/13/2019 Automatic License Plate Recognition Using Python and OpenCV for Image Detection

OpenALPR is an open source Automatic License Plate Recognition library written in C++ with bindings in C#, Java, Node.js, Go, and Python. The library analyzes images and video streams to identify license plates. A related paper is "Automatic License Plate Recognition Using OpenCV and Neural Network" by Sweta Kumari, Leeza Gupta, and Prena Gupta. To recognize a license plate, the plate image is loaded as the source image along with the country name. Later steps include binary thresholding (`CV_THRESH_BINARY`) and, as step 4, edge detection.
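The excerpt above mentions `CV_THRESH_BINARY` and edge detection without showing either step. The following is a minimal, dependency-free sketch of both ideas. It is not OpenALPR's code or the paper's implementation: the function names, the toy image, and the threshold of 128 are all illustrative assumptions. The threshold rule follows OpenCV's binary mode (pixels above the threshold become the maximum value, all others become 0), and the edge detector is a plain horizontal difference rather than any specific operator from the paper.

```python
def threshold_binary(image, thresh, max_value=255):
    """Binary threshold: pixels strictly above `thresh` become `max_value`, others 0."""
    return [[max_value if px > thresh else 0 for px in row] for row in image]


def horizontal_edges(image):
    """Crude edge map: absolute difference between horizontally adjacent pixels."""
    return [[abs(row[x + 1] - row[x]) for x in range(len(row) - 1)] for row in image]


# Hypothetical 3x6 grayscale patch: a dark character stroke on a light plate.
gray = [
    [200, 210, 40, 35, 205, 198],
    [201, 199, 38, 42, 207, 202],
    [198, 204, 41, 39, 203, 200],
]

binary = threshold_binary(gray, thresh=128)
edges = horizontal_edges(binary)

print(binary[0])  # [255, 255, 0, 0, 255, 255]
print(edges[0])   # [0, 255, 0, 255, 0]
```

The two non-zero entries in each edge row mark the left and right boundaries of the dark stroke, which is the kind of structure a plate locator can look for.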

Is there any explanation of what the 5x5 convolve is? Traditionally a 5x5 convolve meant a 5x5 convolution kernel, but I see no mention of what the 5x5 kernel is.
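For readers with the same question, here is a rough sketch of what applying a single 5x5 kernel to an image looks like. In a convolutional network the kernel values are generally learned during training rather than written down, which may be why none is listed. The all-ones kernel, the flat test image, and the function name below are placeholders chosen for illustration, and zero padding is assumed so that the output keeps the input size.

```python
def convolve2d_same(image, kernel):
    """Slide `kernel` over `image` with zero padding so the output matches the input size.

    As in most neural network libraries, the kernel is not flipped, so strictly
    speaking this is cross-correlation.
    """
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    pad_y, pad_x = kh // 2, kw // 2
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0
            for ky in range(kh):
                for kx in range(kw):
                    iy, ix = y + ky - pad_y, x + kx - pad_x
                    # Positions outside the image count as zero.
                    if 0 <= iy < h and 0 <= ix < w:
                        total += image[iy][ix] * kernel[ky][kx]
            out[y][x] = total
    return out


# Hypothetical inputs: a flat 6x8 image and an all-ones 5x5 kernel.
image = [[1] * 8 for _ in range(6)]
kernel = [[1] * 5 for _ in range(5)]

result = convolve2d_same(image, kernel)
print(len(result), len(result[0]))  # 6 8
print(result[2][2])  # 25 (all 25 kernel taps land inside the image)
print(result[0][0])  # 9  (only a 3x3 corner of the kernel overlaps the image)
```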

And after applying the 5x5 convolution kernel filter to the 128px x 64px input image, how did that result in 48 images? What do the 48 images represent? How is image #3 different from image #2 different from image #1 different from image #48? What's a maxpool? It sounds like a 2x2 convolution kernel, but this time it decimates the images from 128x64 down to 64x32. Is it decimating the images down from 128x64 to 64x32?
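On the maxpool question: max pooling is usually not a convolution with weights. It keeps the largest value in each small block of pixels. The sketch below assumes a 2x2 window moved in steps of 2, which is consistent with the 128x64 to 64x32 reduction described above; the function name and test images are illustrative.

```python
def max_pool_2x2(image):
    """Keep the largest value in each non-overlapping 2x2 block."""
    h, w = len(image), len(image[0])
    return [
        [
            max(image[y][x], image[y][x + 1], image[y + 1][x], image[y + 1][x + 1])
            for x in range(0, w - 1, 2)
        ]
        for y in range(0, h - 1, 2)
    ]


small = [
    [1, 2, 5, 6],
    [3, 4, 7, 8],
]
print(max_pool_2x2(small))  # [[4, 8]]

# A hypothetical 128-wide, 64-tall image shrinks to 64 wide, 32 tall.
image = [[(x * y) % 7 for x in range(128)] for y in range(64)]
pooled = max_pool_2x2(image)
print(len(pooled[0]), len(pooled))  # 64 32
```

So yes, under this assumption each pooling step halves both dimensions, and it does so independently for every one of the images (channels) it receives.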

Then you apply a 5x5 convolution to the forty-eight 64x32 images, but this time rather than increasing the number of images by a factor of 48 (1 image -> 48 images in the first 5x5 convolution), it increases the number of images by 33.3% (48 images -> 64 images in the second 5x5 convolution). Why the different number of new images? What do the new images represent? Then there's a 'maxpool' operation, and I wish it said what that was.

Then there's another 5x5 convolution, and this time it doubles the number of images (64 -> 128). Why does the same operation generate a different number of images? Which really brings me back to my first question: what are these different images? What is the 5x5 convolution doing that generates new images? How does it generate new images?
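One way to picture how a convolution layer produces several images: the layer holds a bank of filters, and each filter produces exactly one output image by combining all input images. The number of output images is therefore just the number of filters, which the network designer picks. The sketch below uses tiny sizes and random weights (3 and 4 filters instead of 48, 64, and 128) and no padding to keep it short; every name in it is hypothetical.

```python
import random


def conv_layer(channels_in, filters):
    """Apply a filter bank; each filter yields one output channel.

    channels_in: list of C_in images (each a list of rows).
    filters:     list of C_out filters; each filter holds C_in kernels of size k x k.
    No padding is used, so each output is (k - 1) pixels smaller per dimension.
    """
    h, w = len(channels_in[0]), len(channels_in[0][0])
    k = len(filters[0][0])
    out = []
    for filt in filters:
        fmap = [[0.0] * (w - k + 1) for _ in range(h - k + 1)]
        for y in range(h - k + 1):
            for x in range(w - k + 1):
                # Sum over every input channel and every kernel position.
                fmap[y][x] = sum(
                    channels_in[c][y + dy][x + dx] * filt[c][dy][dx]
                    for c in range(len(channels_in))
                    for dy in range(k)
                    for dx in range(k)
                )
        out.append(fmap)
    return out


def random_filters(c_out, c_in, k, rng):
    """Stand-in for learned weights: c_out filters, each with c_in k x k kernels."""
    return [
        [[[rng.uniform(-1, 1) for _ in range(k)] for _ in range(k)] for _ in range(c_in)]
        for _ in range(c_out)
    ]


rng = random.Random(0)
image = [[rng.random() for _ in range(12)] for _ in range(12)]

layer1 = conv_layer([image], random_filters(3, 1, 5, rng))  # 1 image -> 3 images
layer2 = conv_layer(layer1, random_filters(4, 3, 5, rng))   # 3 images -> 4 images

print(len(layer1), len(layer2))  # 3 4
```

The images differ from one another only because each filter has different weights, so each one responds to a different pattern in its input.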

How does it decide how many new images to create?

Correct, with each layer you get an increasing number of images (or channels, to use the nomenclature). Pooling ensures that there isn't an excessive number of total nodes in each convolutional layer. Roughly speaking, each channel represents a particular feature, and as you get deeper the features represented become higher level (for example, the lowest level features might identify edges of particular orientations, whereas the higher level features might identify letters). The final fully connected layers operate on the high level feature maps to give the desired output.
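To make the channel counts concrete, the shapes can be traced through the layers mentioned in the thread. This is a bookkeeping sketch only. It assumes size-preserving ("same") padding for each 5x5 convolution, assumes the second pool is 2x2 like the first, and assumes no pooling after the third convolution, since the thread does not say; the real network may differ on all three points. The 2048-node layer is the one mentioned later in the thread.

```python
def conv_same(shape, n_filters):
    """Size-preserving convolution: spatial size kept, channels = number of filters."""
    _, height, width = shape
    return (n_filters, height, width)


def max_pool(shape, size=2):
    """Pooling shrinks height and width and leaves the channel count alone."""
    channels, height, width = shape
    return (channels, height // size, width // size)


def conv_weights(c_in, c_out, k=5):
    """Kernel weights plus one bias per output channel."""
    return c_out * (c_in * k * k + 1)


shape = (1, 64, 128)  # (channels, height, width): one 128x64 grayscale image
trace = [shape]

shape = conv_same(shape, 48)
trace.append(shape)
shape = max_pool(shape)
trace.append(shape)
shape = conv_same(shape, 64)
trace.append(shape)
shape = max_pool(shape)  # assumed 2x2
trace.append(shape)
shape = conv_same(shape, 128)
trace.append(shape)

for step in trace:
    print(step)

flat = shape[0] * shape[1] * shape[2]  # every "pixel" of every feature map
fc_weights = flat * 2048               # each one connects to each of 2048 nodes

print(conv_weights(1, 48))  # 1248
print(flat)                 # 65536
print(fc_weights)           # 134217728
```

Under these assumptions the fully connected layer holds far more weights than the convolutions, which is one reason pooling is used to keep the feature maps small before that point.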

They are fully connected in the sense that each 'pixel' in each feature map in the final convolutional layer is connected to each of the 2048 nodes in the second-to-last layer. Similarly each of these 2048 nodes is fully connected to the output layer.

Sorry for the delayed reply. Yes, the detection is rather slow due to the windowing approach I use which applies the net to a large number of windows. I have an idea for fixing it by introducing a new network (roughly based on Joseph ) which is being tracked.
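A quick count shows why a windowing approach gets slow. The sketch below counts how many 128x64 windows a sliding-window detector would evaluate over an image and its downscaled copies. The 128x64 window matches the input size discussed in the thread; the stride of 8 pixels, the halving between scales, and the 640x480 image are assumptions made for illustration, not the author's actual settings.

```python
def windows_at_scale(img_w, img_h, win_w, win_h, stride):
    """Number of window positions that fit in an image of the given size."""
    if img_w < win_w or img_h < win_h:
        return 0
    return ((img_w - win_w) // stride + 1) * ((img_h - win_h) // stride + 1)


def count_windows(img_w, img_h, win_w=128, win_h=64, stride=8, shrink=2):
    """Total windows over the image and its repeatedly shrunken copies."""
    total = 0
    while img_w >= win_w and img_h >= win_h:
        total += windows_at_scale(img_w, img_h, win_w, win_h, stride)
        img_w //= shrink
        img_h //= shrink
    return total


print(windows_at_scale(640, 480, 128, 64, 8))  # 3445
print(count_windows(640, 480))                 # 4060
```

Each of those windows means one full pass through the network, so even a modest image requires thousands of evaluations unless the work is shared between overlapping windows.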

I made a start on fixing this (and the issue is currently assigned to me), but I haven't had a chance to work on it lately and haven't got any immediate plans to do so. I'll push my work on this to a separate branch this evening so that others can pick up where I left off. Let me know if you want to take it over and I'll re-assign the issue and fill you in on the general direction I was taking. Alternatively, if anyone has a separate idea for how to speed up detection, please raise an issue similar to #5 and we can talk about implementation.
